HexaField

SSCCS Validation on a Hexapod Robot

Executive Summary

HexaField is a demonstration project that validates the core concepts of the State‑Space Composition Computing System (SSCCS) on an 18‑degree‑of‑freedom hexapod walking robot. The robot’s six legs, each with three independently actuated joints, provide a naturally high‑dimensional state space in which the SSCCS abstractions (Segment, Scheme, Field, Observation, Projection) are directly observable as physical motion. The project delivers a complete open‑source software stack running on affordable hardware (Raspberry Pi 5, a low‑cost 2D LiDAR, standard servos). Its goal is to show that SSCCS is not merely a theoretical construct but a practical, reproducible computing paradigm for real‑world robotic systems.

Internal Architecture Flow

Why a Hexapod

| Criterion | Hexapod Advantage |
|---|---|
| Rich state space | 18 independent joints create a complex but still understandable multi‑dimensional coordinate system. |
| Structural variety | Multiple gaits (tripod, wave, ripple) serve as natural Schemes. |
| Sensor integration | IMU, LiDAR, and optional camera provide realistic Field inputs (slope, obstacles). |
| Physical projection | Joint torques, body posture, and walking speed are unambiguous Projections. |
| Low entry barrier | Consumer‑grade hexapod kits cost under $500 and can be upgraded incrementally. |
| Visual appeal | A walking robot instantly communicates “computation in action” to any audience. |
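To make the "gaits as Schemes" row concrete, the sketch below encodes two of the gaits as fixed per-leg phase offsets. It is an illustration only: the leg numbering (0 to 5, chosen so that the even legs form one tripod), the function names, and the swing-window test are assumptions, not part of the HexaField code. The ripple gait is left out because its offset conventions vary between implementations.

```python
from fractions import Fraction

NUM_LEGS = 6  # legs numbered 0..5 (assumed numbering; even legs form one tripod)


def tripod_offsets():
    """Tripod gait: even legs share phase 0, odd legs share phase 1/2."""
    return [Fraction(leg % 2, 2) for leg in range(NUM_LEGS)]


def wave_offsets():
    """Wave gait: leg i starts its cycle at i/6, so legs lift one at a time."""
    return [Fraction(leg, NUM_LEGS) for leg in range(NUM_LEGS)]


def legs_in_swing(offsets, t, swing_fraction):
    """Legs whose phase at time t (in gait cycles) falls in the swing window."""
    return [leg for leg, offset in enumerate(offsets)
            if (t + offset) % 1 < swing_fraction]


half = Fraction(1, 2)
print(legs_in_swing(tripod_offsets(), 0, half))          # [0, 2, 4]
print(legs_in_swing(tripod_offsets(), half, half))       # [1, 3, 5]
print(legs_in_swing(wave_offsets(), 0, Fraction(1, 6)))  # [0]
```

Because each gait is just a static table of offsets, switching gaits replaces the table without touching the joints themselves, which is the sense in which a gait acts as a Scheme.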

SSCCS Concepts ↔︎ Robot Mapping

| SSCCS Concept | Formal Definition | Robot Instantiation |
|---|---|---|
| Segment | An immutable coordinate point in a multi‑dimensional possibility space. | Each servo joint (6 legs × 3 = 18) – its angular position, velocity, and torque form a coordinate tuple. |
| Scheme | A static blueprint defining axes, relations, and memory layout. | A predefined gait pattern (tripod, wave, ripple) with fixed joint‑to‑joint adjacency (e.g., leg coordination rules). |
| Scheme Templates | Predefined topological patterns. | HexapodTripod, HexapodWave, HexapodRipple (as URDF + gait generator). |
| Field | A mutable constraint predicate C(s) and transition matrix T. | Dynamic parameters: payload weight, slope angle, speed priority, obstacle proximity. |
| Field Composition | Union, intersection, product of Fields. | Balancing stability and speed via two linked sliders (intersection). |
| Observation | The event Ω(Σ, F) that produces a Projection. | Real‑time sensor fusion (IMU tilt, LiDAR scan) + user input (sliders, voice). |
| Projection | The result P = Ω(Σ, F). | Resulting joint torques, body height, walking speed, or navigated path. |
| Structural Isolation | Immutability guarantees no interference. | Joints do not mutate independently; only the Field changes, and all joints recompute projections deterministically. |
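The mapping above can be sketched as a handful of Python types. This is a toy model under stated assumptions, not the SSCCS reference implementation: the class names mirror the table, while `BODY_MASS_KG`, the `payload_kg` attribute, and the load-per-leg projection are hypothetical choices made only to give `observe` something to compute.

```python
from dataclasses import dataclass, FrozenInstanceError
from typing import Tuple

JOINTS = ("coxa", "femur", "tibia")
BODY_MASS_KG = 2.0  # assumed robot mass, for illustration only


@dataclass(frozen=True)
class Segment:
    """Immutable coordinate point: one joint slot in the 18-joint space."""
    leg: int
    joint: str


@dataclass
class Field:
    """Mutable constraint parameters applied at observation time."""
    payload_kg: float = 0.0


@dataclass(frozen=True)
class Projection:
    """Result of an Observation; here only the static load per leg."""
    load_per_leg_kg: float


def build_scheme() -> Tuple[Segment, ...]:
    """Scheme: the fixed layout of all Segments (6 legs x 3 joints)."""
    return tuple(Segment(leg, joint) for leg in range(6) for joint in JOINTS)


def observe(scheme: Tuple[Segment, ...], field: Field) -> Projection:
    """Observation: a pure function of (Scheme, Field), hence deterministic."""
    legs = {segment.leg for segment in scheme}
    return Projection((BODY_MASS_KG + field.payload_kg) / len(legs))
```

Structural isolation shows up in two places: `Segment` is frozen, so an attempted assignment raises `FrozenInstanceError`, and `observe` reads the Field without writing to anything, so the same Scheme and Field always give the same Projection.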

Hardware Baseline and Upgrades

Starting Platform

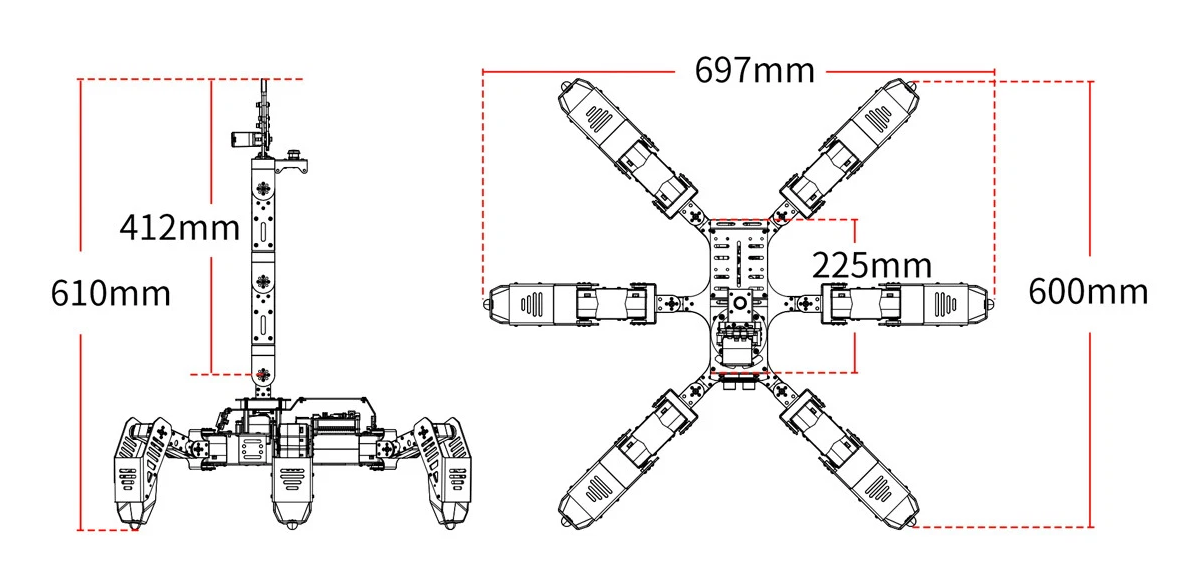

SpiderPi Pro: Typical 18‑DOF Hexapod Kit with Raspberry Pi 8GB on a custom carrier board.

| Component | Specification |
|---|---|
| Degrees of freedom | 18 (6 legs × 3 joints: coxa, femur, tibia) |
| Servos | 20 kg·cm digital serial‑bus (e.g., LX‑16A, STS3215) |
| On‑board computer | Raspberry Pi 4B (4 GB) |
| Sensors | 9‑axis IMU (MPU9250), ultrasonic distance sensor |
| Power | 2‑cell Li‑Ion (7.4 V) with 5 V step‑down for Pi |
| Software | Python control scripts, inverse kinematics, no ROS2 |

Mandatory Upgrades

| Component | Upgrade Part | Purpose |
|---|---|---|
| Processor | Raspberry Pi 5 (8 GB) + active cooler | Run ROS2, LLM, and control stack smoothly |
| LiDAR | YDLIDAR X4 (360°, 10 Hz, 12 m range) | SLAM and obstacle avoidance (Demo 3) |
| Servo power | 12 V 5 A step‑up regulator (MT3608) | Provide stable 12 V for high torque |
| Battery | 3‑cell Li‑Po (11.1 V, 2200 mAh) | Extended runtime, peak current |
| Communication | USB‑TTL adapter (CP2102) | Isolate servo bus from Pi |
| Cooling | 40 mm fan for Pi 5 | Prevent thermal throttling |
| Total mandatory | | 230 USD (see Budget Summary) |

Optional Upgrades

| Component | Upgrade Part | Purpose |
|---|---|---|
| Camera | Raspberry Pi Camera Module 3 | Vision‑based field detection (future extension) |
| Mechanical | Aluminium leg linkages | Reduce flex, improve repeatability |

| Mechanical | Aluminium leg linkages | Reduce flex, improve repeatability |

Wiring Diagram

Software Architecture

The entire control stack runs on Ubuntu 24.04 + ROS2 Jazzy with a real‑time kernel.

Core ROS2 Packages

sudo apt install ros-jazzy-ros2-control ros-jazzy-ros2-controllers

sudo apt install ros-jazzy-joint-state-publisher ros-jazzy-robot-state-publisher

sudo apt install ros-jazzy-moveit ros-jazzy-slam-toolbox ros-jazzy-navigation2

sudo apt install ros-jazzy-rosbridge-server

Custom ROS2 Nodes (SSCCS Core)

| Node | Language | Responsibility |
|---|---|---|
| hexapod_hardware_interface | C++ (ros2_control) | Serial bus communication with 18 servos; joint state feedback. |
| field_to_gait | Rust (rclrs) | Subscribes to /field_params (slope, payload, speed priority). Computes gait parameters (step height, velocity, body offset). |
| gait_generator | Python | Inverse kinematics; publishes /joint_trajectory to ros2_control. |
| llm_adapter | Python | Receives /voice_command, prompts local LLM, publishes JSON actions to Nav2. |
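The inverse-kinematics step assigned to gait_generator can be illustrated for a single three-joint leg. The sketch below is a standard law-of-cosines solution, not the project's actual solver. The link lengths, the leg-frame convention (origin at the coxa joint, x outward, z up), and the function names `leg_ik` and `leg_fk` are all assumptions made for the example.

```python
import math

# Hypothetical link lengths in cm; measure the real kit before use.
COXA, FEMUR, TIBIA = 4.5, 7.5, 14.0


def _acos(value):
    """acos with clamping to absorb rounding just outside [-1, 1]."""
    return math.acos(max(-1.0, min(1.0, value)))


def leg_ik(x, y, z):
    """Return (coxa, femur, tibia) angles in radians for a foot target.

    The target is in the leg frame: origin at the coxa joint, x pointing
    outward, z pointing up (a foot on the ground has negative z).
    """
    coxa = math.atan2(y, x)
    reach = math.hypot(x, y) - COXA   # horizontal distance from the femur joint
    dist = math.hypot(reach, z)       # straight line from femur joint to foot
    if dist == 0 or dist > FEMUR + TIBIA or dist < abs(FEMUR - TIBIA):
        raise ValueError("foot target is out of reach")
    # Law of cosines on the femur-tibia-target triangle.
    hip = _acos((FEMUR**2 + dist**2 - TIBIA**2) / (2 * FEMUR * dist))
    knee = _acos((FEMUR**2 + TIBIA**2 - dist**2) / (2 * FEMUR * TIBIA))
    femur = math.atan2(z, reach) + hip   # knee-up solution
    tibia = knee - math.pi               # 0 = straight leg, negative = bent down
    return coxa, femur, tibia


def leg_fk(coxa, femur, tibia):
    """Forward kinematics, used here only to check the IK result."""
    radial = COXA + FEMUR * math.cos(femur) + TIBIA * math.cos(femur + tibia)
    z = FEMUR * math.sin(femur) + TIBIA * math.sin(femur + tibia)
    return radial * math.cos(coxa), radial * math.sin(coxa), z
```

Feeding the angles from `leg_ik(12.0, 5.0, -8.0)` into `leg_fk` should return the same foot position up to floating-point error, which is a cheap sanity check before any angle is sent to a servo.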

Local LLM Integration (for Demo 3)

- Model: Phi-3.5-mini-instruct-Q4_K_M.gguf (2.2 GB) – 3.8B parameters, 4‑bit quantised.

- Engine: llama.cpp compiled for ARM with BLAS.

- STT: vosk offline English model (50 MB).

- Prompt template (forces JSON output):

System: You are a hexapod navigation assistant. Output only JSON.

User: <speech‑to‑text>

A: {"action": "modify_nav2", "params": {...}}
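A prompt can request JSON but cannot guarantee it, so an adapter would normally validate the reply before forwarding anything to Nav2. The following is a minimal sketch of such a check, assuming the reply is available as a plain string. The function name `parse_llm_action` and the `max_speed` key in the usage example are hypothetical, and `modify_nav2` is the only action allowed because it is the only one named in the template.

```python
import json

# Only "modify_nav2" appears in the prompt template; extend as needed.
ALLOWED_ACTIONS = {"modify_nav2"}


def parse_llm_action(reply: str) -> dict:
    """Extract and validate the first JSON object found in an LLM reply."""
    start = reply.find("{")
    if start == -1:
        raise ValueError("reply contains no JSON object")
    # raw_decode parses one JSON value and ignores any trailing text.
    # Malformed JSON raises json.JSONDecodeError, a subclass of ValueError.
    action, _ = json.JSONDecoder().raw_decode(reply[start:])
    if action.get("action") not in ALLOWED_ACTIONS:
        raise ValueError(f"unsupported action: {action.get('action')!r}")
    if not isinstance(action.get("params"), dict):
        raise ValueError("'params' must be a JSON object")
    return action


reply = 'Sure. {"action": "modify_nav2", "params": {"max_speed": 0.2}}'
print(parse_llm_action(reply)["params"])  # {'max_speed': 0.2}
```

Rejecting anything outside the allow-list means a malformed or unexpected reply fails in the adapter rather than reaching the navigation stack.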

URDF Model

A complete URDF defines 18 joints, each with limits, effort, and velocity. Transform tree: base_link → 6 coxa links → 6 femur links → 6 tibia links → foot frames. All transforms are published via robot_state_publisher.
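The transform tree described above can be enumerated and checked in a few lines before any URDF is written. The naming scheme used here (`leg0_coxa_joint`, `leg0_coxa_link`, `leg0_foot`) is a hypothetical convention for illustration; the actual URDF may name its joints and links differently.

```python
NUM_LEGS = 6
LEG_PARTS = ("coxa", "femur", "tibia")


def joint_names():
    """All 18 joint names, e.g. 'leg0_coxa_joint' (hypothetical naming)."""
    return [f"leg{leg}_{part}_joint"
            for leg in range(NUM_LEGS) for part in LEG_PARTS]


def parent_map():
    """Map each child frame to its parent, following the transform tree."""
    parents = {}
    for leg in range(NUM_LEGS):
        chain = (["base_link"]
                 + [f"leg{leg}_{part}_link" for part in LEG_PARTS]
                 + [f"leg{leg}_foot"])
        for parent, child in zip(chain, chain[1:]):
            parents[child] = parent
    return parents


def path_to_root(frame, parents):
    """Walk from a frame up to the root of the tree."""
    path = [frame]
    while path[-1] in parents:
        path.append(parents[path[-1]])
    return path


print(len(joint_names()))                            # 18
print(path_to_root("leg3_foot", parent_map())[-1])   # base_link
```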

Demonstration Use Cases

Demo 1: Segment Selection & Field Observation (CLI / config)

Goal: Show that changing a Field (payload) modifies Projection (joint torques, body height) while Segments (joint coordinates) stay fixed.

Setup: Robot on flat surface. Field parameters provided via command line or YAML config file (no GUI).

Steps:

- Launch the SSCCS control stack with the default Field: payload = 0.

- Robot stands at nominal body height.

- User changes the Field: ros2 param set /field_to_gait payload 2.0

- Robot immediately lowers its body by 2 cm; joint torques increase (observable via ros2 topic echo /joint_states).

SSCCS lesson: Segment coordinates did not move; only the Field changed. Projection (body height, torque) changed deterministically.
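The payload-to-height relationship in this demo can be sketched as a pure function. Everything numeric here is an assumption: the nominal and minimum heights are placeholders, and the linear rate of 1 cm per unit of payload is chosen only because it reproduces the 2 cm drop for payload 2.0 described above. The real field_to_gait node is planned in Rust and may use a different mapping.

```python
NOMINAL_BODY_HEIGHT_CM = 12.0   # assumed standing height
MIN_BODY_HEIGHT_CM = 6.0        # assumed mechanical lower limit
DROP_CM_PER_UNIT_PAYLOAD = 1.0  # chosen so payload 2.0 lowers the body by 2 cm


def body_height_cm(payload: float) -> float:
    """Projection of the payload Field onto body height (assumed linear)."""
    if payload < 0:
        raise ValueError("payload must be non-negative")
    height = NOMINAL_BODY_HEIGHT_CM - DROP_CM_PER_UNIT_PAYLOAD * payload
    return max(MIN_BODY_HEIGHT_CM, height)


print(body_height_cm(0.0))  # 12.0
print(body_height_cm(2.0))  # 10.0
```

The function takes only the Field value as input and holds no state, so repeating the same `ros2 param set` would yield the same body height every time.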

Demo 2: Field Composition – Speed vs. Stability (CLI)

Goal: Demonstrate that composing two Fields (intersection) produces emergent gait behaviour.

Setup: Robot on a 15° wooden ramp. IMU provides slope angle.

Steps:

- Set Field A (stability) = 0.9, Field B (speed) = 0.1 → low‑amplitude wave gait, velocity 5 cm/s.

- Set Field A = 0.1, Field B = 0.9 → dynamic tripod gait, velocity 20 cm/s.

- Set Field A = 0.5, Field B = 0.5 → hybrid gait emerges, velocity 10 cm/s, step height 4 cm.

SSCCS lesson: Intersection of two Fields yields a third Field whose projection (gait) is not an average but an emergent property of the structural composition.
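One way to read Field intersection is as the conjunction of two constraint predicates over candidate gaits. The sketch below illustrates that reading and nothing more: the per-gait `stability` and `speed` scores are hypothetical values picked so that the three settings from the steps above select the wave, tripod, and hybrid gaits. It also treats the hybrid gait as a precomputed candidate, which is a simplification of the emergent behaviour the demo aims to show.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Gait:
    name: str
    velocity_cm_s: float
    stability: float  # hypothetical score in [0, 1]
    speed: float      # hypothetical score in [0, 1]


# Velocities follow the demo steps; the scores are illustrative assumptions.
CANDIDATES = (
    Gait("wave", 5.0, stability=0.9, speed=0.1),
    Gait("hybrid", 10.0, stability=0.5, speed=0.5),
    Gait("tripod", 20.0, stability=0.1, speed=0.9),
)

Field = Callable[[Gait], bool]  # a Field as a constraint predicate C(s)


def stability_field(level: float) -> Field:
    return lambda gait: gait.stability >= level


def speed_field(level: float) -> Field:
    return lambda gait: gait.speed >= level


def intersect(a: Field, b: Field) -> Field:
    """Intersection: a gait is admissible only if both Fields accept it."""
    return lambda gait: a(gait) and b(gait)


def admissible(field: Field) -> List[str]:
    return [gait.name for gait in CANDIDATES if field(gait)]


print(admissible(intersect(stability_field(0.9), speed_field(0.1))))  # ['wave']
print(admissible(intersect(stability_field(0.1), speed_field(0.9))))  # ['tripod']
print(admissible(intersect(stability_field(0.5), speed_field(0.5))))  # ['hybrid']
```

Under this reading the composed Field is a new predicate in its own right, and the selected gait follows from which candidates satisfy both constraints rather than from averaging the two inputs.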

Implementation Roadmap

| Week | Milestone | Deliverables |
|---|---|---|
| 1 | Hardware assembly | Robot upgraded with RPi5, LiDAR, 12 V power; servos calibrated. |
| 2 | OS + ROS2 setup | Ubuntu 24.04, ROS2 Jazzy, ros2_control hardware interface. |
| 3 | URDF + TF | Complete URDF, transforms in RViz, joint state publisher. |
| 4 | Basic gait | Tripod gait in Python; robot walks on flat surface. |
| 5 | Field parameter node (Rust) | field_to_gait subscribes to /field_params, outputs gait parameters. |
| 6 | Demo 1 (CLI) | Change payload via ros2 param, observe body height change. |
| 7 | LiDAR SLAM | slam_toolbox running, map building. |
| 8 | Nav2 integration | Basic obstacle avoidance; robot navigates to waypoints. |
| 9 | Field composition (two‑parameter) | CLI with stability and speed; hybrid gait emergence. |
| 10 | Demo 2 (ramp) | Verify gait changes on 15° slope. |
| 11 | LLM server | llama.cpp + Phi‑3.5‑mini running, JSON output. |
| 12 | LLM → Nav2 adapter | Voice commands modify navigation. |
| 13 | Demo 3 (voice navigation) | End‑to‑end voice‑to‑nav, no manual intervention. |
| 14 | Documentation + release | GitHub repository, demo videos, README. |

Validation Process & Demo

Phase 1: Preparation (Weeks 1–4)

- Hardware Upgrade (RPi5, LiDAR, power)

- OS & ROS2 Installation (Ubuntu 24.04, Jazzy)

- URDF & ros2_control (18‑joint interface)

- Basic Gait Controller (tripod, wave)

Phase 2: Demo 1 – Segment & Field (CLI)

- Set Field: payload via CLI

- Observe body height change

Phase 3: Demo 2 – Field Composition (CLI)

- Place robot on 15° ramp

- Set stability & speed parameters

- Observe emergent hybrid gait

Phase 5: Finalisation (Week 14)

- Integration testing

- Demo video recording

- Open‑source release

Budget Summary

| Category | Item | Cost (USD) |
|---|---|---|
| Compute | Raspberry Pi 5 (8 GB) + active cooler | 95 |
| Sensor | YDLIDAR X4 | 80 |
| Power | 12 V step‑up regulator + 3‑cell Li‑Po | 40 |
| Communication | USB‑TTL adapter | 5 |
| Cooling | 40 mm fan | 10 |
| Mandatory total | | 230 |
| Optional | Camera module, aluminium linkages | 175 |

All other components (servos, frame, Pi 4B) are already part of the base robot.

Risk Assessment and Mitigation

| Risk | Probability | Impact | Mitigation |
|---|---|---|---|
| RPi5 overheats under LLM + Nav2 load | Medium | High | Active cooling; throttle LLM to <10% CPU; use 4‑bit model. |
| Servo bus latency with 18 servos | Low | Medium | Use 1 Mbps serial; split legs across two USB‑TTL adapters. |
| LLM response time >5 seconds | Medium | Low | Use TinyLlama (1.1B) instead of 3.8B; preload; performance governor. |
| LiDAR drift on slopes | Low | Medium | Fuse IMU with LiDAR; run Nav2 only on flat areas for demo. |
| CLI interaction overhead | Low | Low | Provide launch scripts with parameter presets; document commands. |

Conclusion

HexaField is a minimal but sufficient robotics demonstration that validates SSCCS on a real physical system. The 18‑DOF hexapod provides a rich state space in which every abstract SSCCS primitive has a direct, observable counterpart. The three demonstrations (segment selection, field composition, and LLM‑driven navigation) are together intended to show that SSCCS can drive a complex, sensor‑feedback‑controlled robot with deterministic, verifiable behaviour. By releasing all hardware notes, software, and documentation as open source, HexaField becomes a reusable reference project for SSCCS.